|

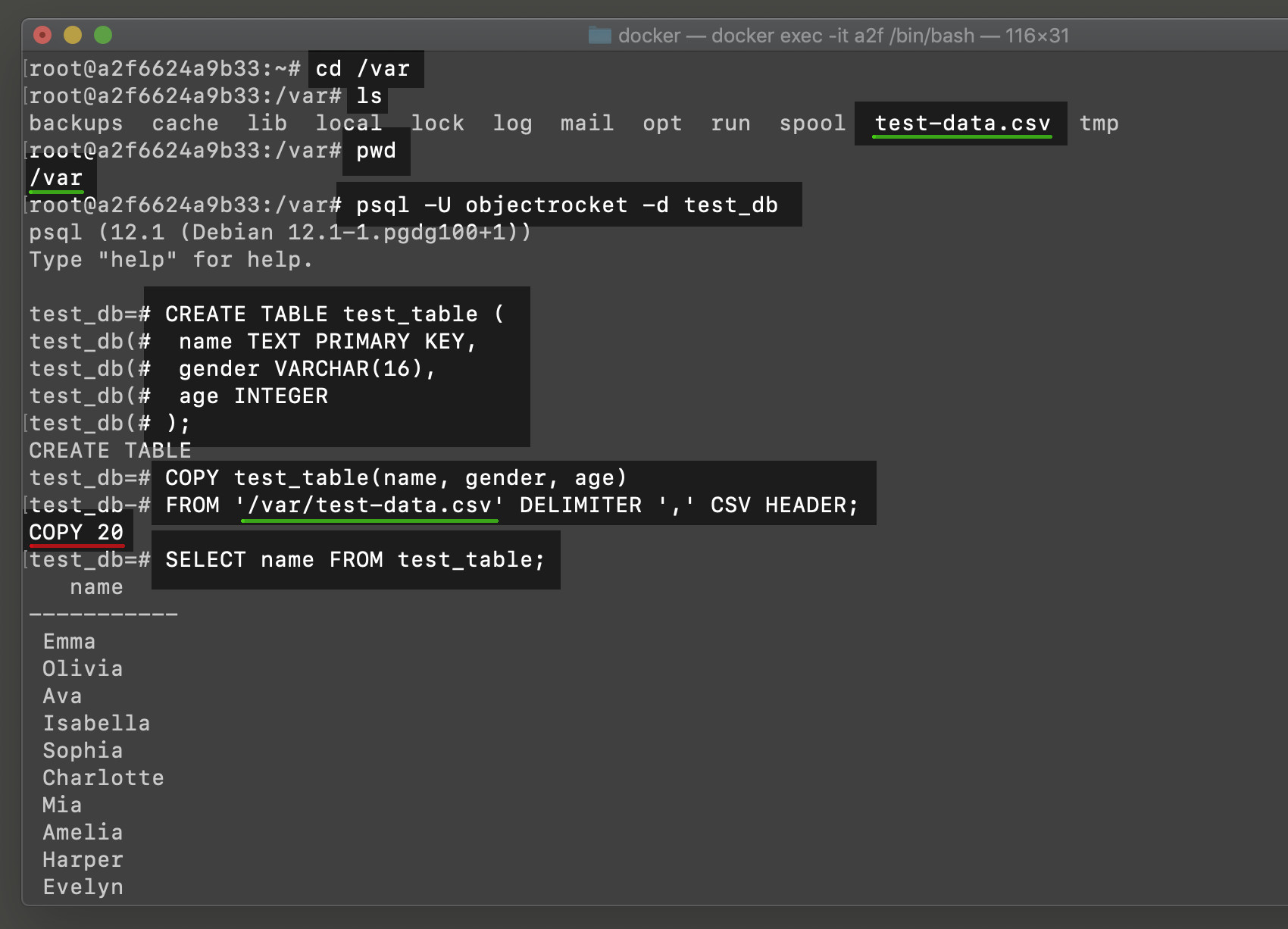

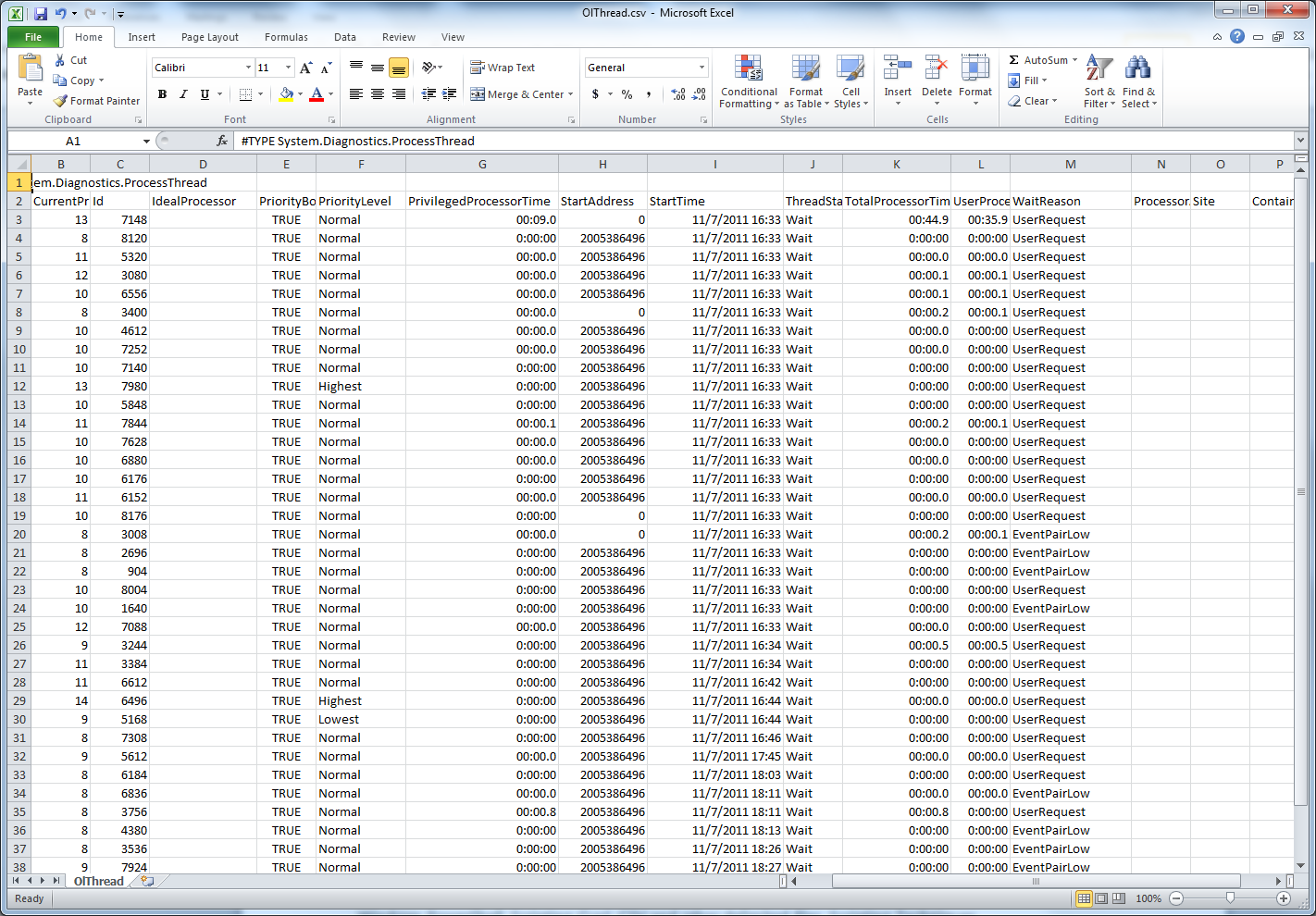

Define the foreign table that points to our CSV fileĬREATE FOREIGN TABLE foreign_sales_record ( Create file_fdw extension and foreign serverĬREATE SERVER file_fdw_server FOREIGN DATA WRAPPER file_fdw The foreign-data wrapper file_fdw, can be used to access data files in the server’s file system, or to execute programs on the server and read their output. We can also use the file_fdw to load data from CSV to PostgreSQL tables. \copy sales_record FROM '/Users/muhammadusama/work/data/5m_Sales_Records.csv' csv header Through file_fdw This means that file accessibility and privileges are those of the local user, not the server, and no SQL superuser privileges are required. ‘ \copy‘ is a psql operation that runs an SQL COPY command, but instead of the server reading or writing the specified file, psql (client) reads or writes the file and routes the data between the server and the local file system. COPY sales_record FROM '/Users/muhammadusama/work/data/5m_Sales_Records.csv' CSV HEADER Load using psql ‘\copy’ The former copies the table content to the file, while we will use the latter to load data into the table from the file. The copy command comes in two variants, COPY TO and COPY FROM. CREATE INDEX country_idx ON sales_record USING btree (country) Load using the COPY commandĬOPY moves data between PostgreSQL tables and standard file-system files. To keep things simple I created a sales_record table in PostgreSQL with one to one mapping with the CSV file CREATE TABLE sales_recordĪlong with that I also wanted to see the impact of having an index on the bulk load performance, So for tests that require an INDEX, I created a btree index on the country column. The sample CSV file contains 5 million rows, 14 columns and 624MB in size. shared_buffers = 2GBįor the purpose of this exercise, I downloaded a sample CSV file from with 5million rows.

I left most of the configuration parameter to their default values and only changed the below mentioned settings. Since the intention was to do a relative performance comparison among different data loading techniques and options, so using the personal MacBook Pro running macOS Catalena with 16GB of RAM, 2.7 GHz Quad-Core Intel Core i7 processor, and 500 GB SSD disk was good enough to serve the purpose.įor database I compiled PostgreSQL v12 from source code with default configure options. Moreover, I wanted to see the performance difference of COPY command, client-side copy command, loading through file_fdw, and pg_bulkload for each of the above options. In short I wanted to see the performance difference of loading the data into standard vs unlogged tables and want to compare the loading time difference between loading into table that has an index vs drop-index->load->recreate-index option. So I decided to do a simple comparison of bulk loading options and techniques. But one limitation with the copy command is that it requires the CSV file to be placed on the server.

Goto solution for bulk loading into PostgreSQL is the native copy command. I found my self doing the same few days back when I wanted to design a data ingestion process for PostgreSQL where we needed to bulk load around 250GB of data from CSV files every 24 hours.

More often than not the question is how much time would the bulk load would take. There are lots of options to do this but how would you decide which one to use. You have a file, possibly a huge CSV, and you want to import its content into your database.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed